When Your IA Data Doesn’t Show a Clear Trend: A Calm, Practical Guide

Take a breath. One of the most common moments of panic for IB DP students is opening a dataset and seeing a scatter of points that doesn’t line up with the neat trend you expected. That jolt is normal—science and investigation rarely follow a straight line. What matters is how you respond. This guide walks you through practical checks, analytic choices, write-up language, and design pivots so an ambiguous result becomes evidence of careful thinking rather than a failed experiment.

Whether you’re working on an Internal Assessment (IA), an Extended Essay (EE) with experimental work, or preparing TOK links that explore the nature of evidence, the same principles apply: diagnose the source of ambiguity, choose tools that fit your data, and write honestly and analytically about what the data can and cannot show. Below you’ll find step-by-step actions, quick statistical guides, write-up phrases you can adapt, and realistic ways to salvage a project while staying academically rigorous.

First steps: immediate checks before you change your question

Quick diagnostic checklist

Before you reach for new equipment or rewrite your research question, run these basic checks. Often the problem is something simple and fixable.

- Data entry: Are numbers copied correctly? Check decimals, commas, and unit conversions. A single misplaced decimal can distort or hide a trend.

- Units and scales: Are all values in the same unit system and scale (e.g., seconds vs minutes, g vs mg)?

- Instrument calibration: Were devices zeroed and calibrated? A systematic offset can mask relationships.

- Replicates and sample size: Did you record enough independent trials? With too few replicates, means are unreliable and the spread is hard to estimate.

- Protocol consistency: Were conditions controlled (temperature, timing, technique) across trials?

- Outliers and entry errors: Identify obvious outliers, but don’t delete them without reason—record and justify any exclusions.

- Randomization and order effects: Could time-of-day or order of trials explain noise?

Quick wins you can try now

- Re-plot your data with a simple scatter plot and error bars or boxplots to reveal spread.

- Recalculate a small subset manually to verify formulas and derived columns (means, rates, percentages).

- Check raw observations and lab notes—sometimes the explanation is written in a margin note.

- If possible, rerun a single trial under carefully controlled conditions to compare.
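To support the re-plotting and recalculation steps above, here is a minimal sketch that computes the mean and sample standard deviation for each level of the independent variable. The readings and the name `trials` are hypothetical placeholders; substitute your own raw data. The resulting values are what you would plot as points with error bars.

```python
from statistics import mean, stdev

# Hypothetical replicate readings, keyed by the independent-variable level.
trials = {
    10: [2.1, 2.4, 1.9],
    20: [2.6, 2.2, 2.9],
    30: [2.5, 3.1, 2.3],
}

def summarize(trials):
    """Return {level: (mean, sample SD, n)} for each level, in sorted order."""
    summary = {}
    for level, readings in sorted(trials.items()):
        summary[level] = (mean(readings), stdev(readings), len(readings))
    return summary

summary = summarize(trials)
for level, (m, sd, n) in summary.items():
    print(f"x={level}: mean={m:.2f}, SD={sd:.2f}, n={n}")
```

Comparing these numbers against the derived columns in your spreadsheet is a quick way to catch a formula error.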

Use a diagnostic table to prioritize fixes

When time is limited, a short table helps you decide what to do first. Here’s a compact checklist you can adapt for your IA lab book.

| Symptom | Possible cause | Immediate action | When to consult your teacher/tutor |
|---|---|---|---|
| Flat scatter with high variance | High measurement error or too-small effect size | Check precision of instruments; add replicates; increase resolution | After 1–2 repeat checks or if calibration is unclear |
| One or two extreme points | Recording mistake or rare experimental error | Verify raw notes; rerun if possible; record justification for exclusion | If exclusion changes conclusions materially |
| Nonlinear pattern | Relationship not linear or scale mismatch | Try transformations, non-linear fit, or alternate independent variable | If unsure which statistical approach to use |

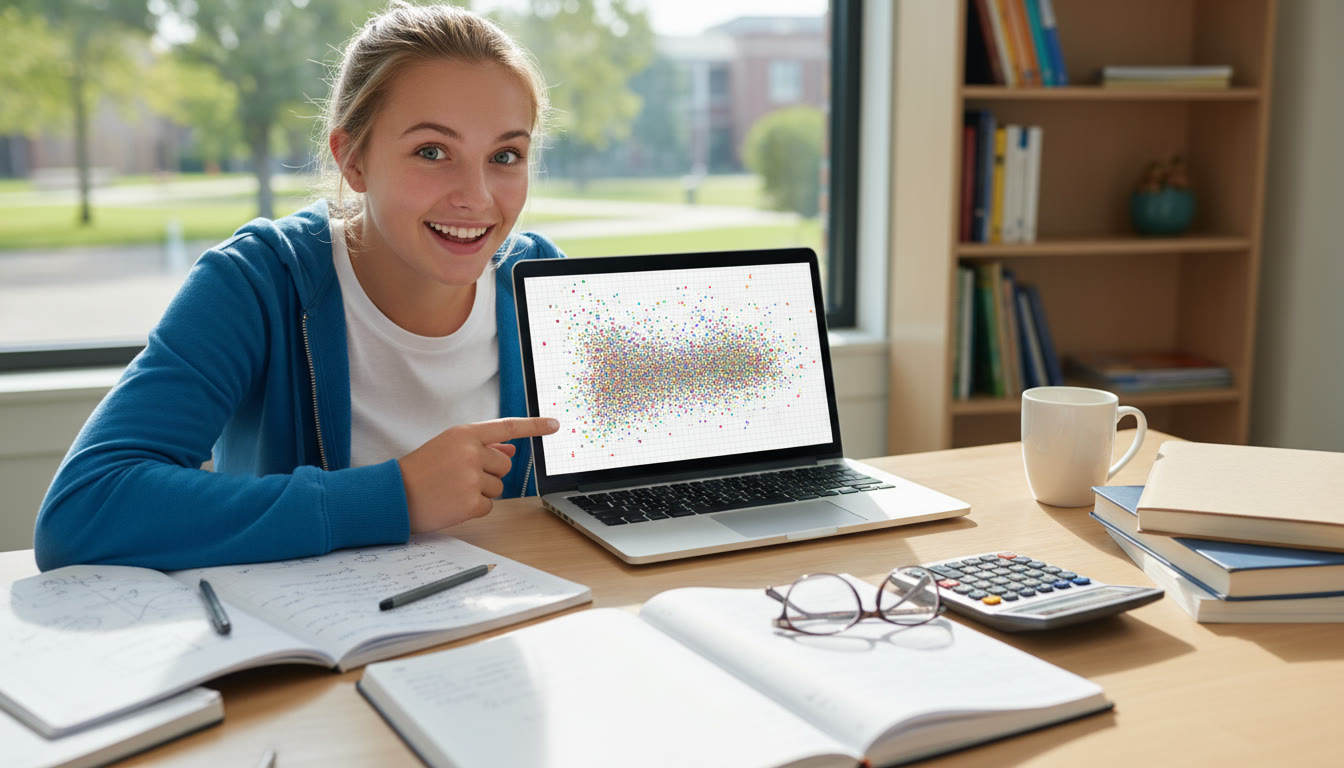

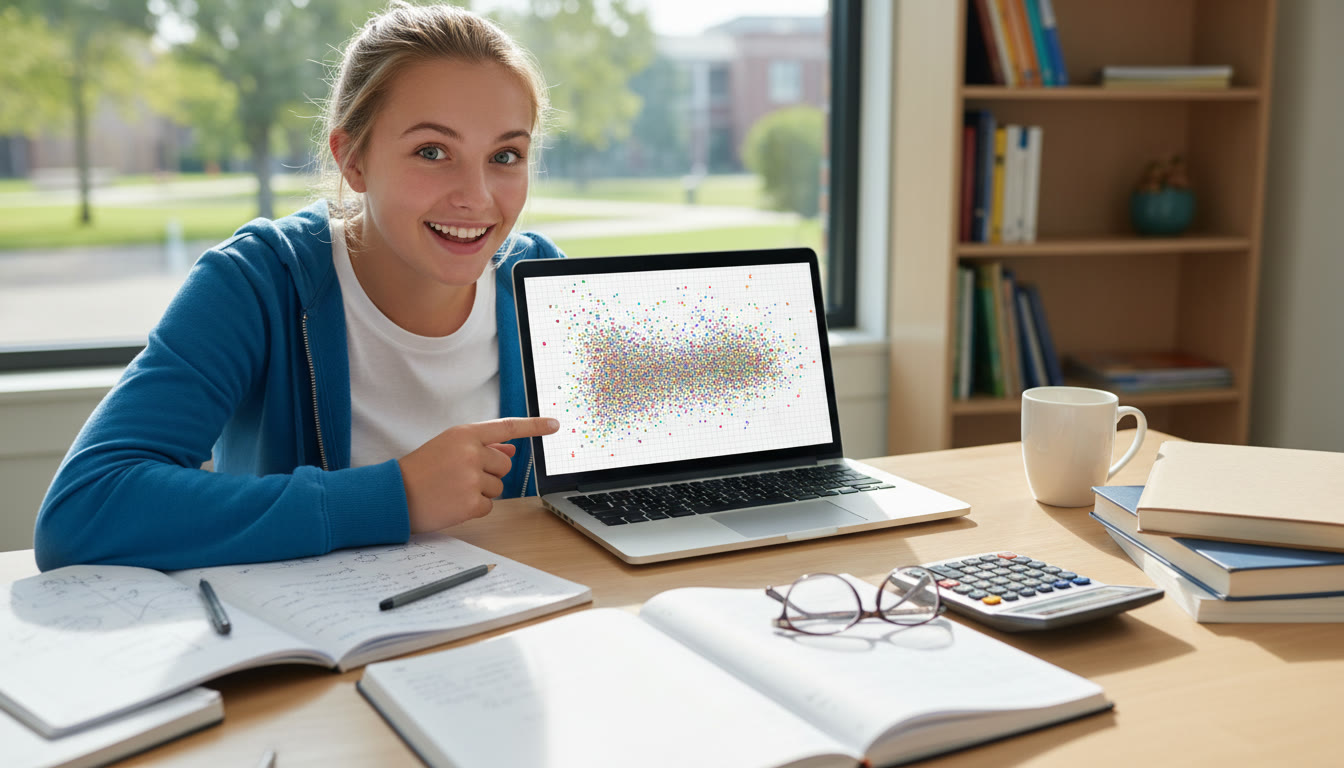

Visual instincts: make the data talk

Graphs are your first line of insight. Don’t rely solely on summary statistics—the shape of the data carries context that numbers can hide.

- Scatter plots: Plot raw pairs first. Use semi-transparent points when many points overlap, and add jitter if the data are discrete.

- Error bars and boxplots: Show variability of replicates clearly. When the error bars of neighbouring means overlap heavily, that overlap often explains the lack of a clear trend.

- Residual plots: After fitting a model, plot residuals against predicted values or the independent variable. A visible pattern in the residuals (a curve or a funnel shape) suggests the model does not fit well.

- Smoothing: Add a lowess/LOESS curve or a local regression to see if a subtle non-linear trend exists.

Good visualization often points to whether the problem is noise, a wrong model, or an underlying confounder.
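The residual check described above can be sketched in a few lines. This is a minimal illustration using an ordinary least-squares straight line; the data are hypothetical and deliberately curved so that the pattern in the residuals is easy to see.

```python
def fit_line(xs, ys):
    """Ordinary least-squares straight line; returns (slope, intercept)."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept

def residuals(xs, ys, slope, intercept):
    """Observed value minus the value predicted by the fitted line."""
    return [y - (slope * x + intercept) for x, y in zip(xs, ys)]

# Hypothetical data that follow a curve (y = x squared), not a line.
xs = [1, 2, 3, 4, 5]
ys = [1, 4, 9, 16, 25]

slope, intercept = fit_line(xs, ys)
res = residuals(xs, ys, slope, intercept)
print("slope:", slope, "intercept:", intercept)
print("residuals:", res)
```

Here the residuals are positive at both ends and negative in the middle. That U-shape is the kind of pattern that indicates a straight line is the wrong model, even when the fitted slope looks convincing.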

Statistical choices: pick the right lens for your data

Different tests answer different questions. Picking the wrong one can make a genuine effect disappear or make noise look meaningful.

Correlation and regression—know what each tells you

Pearson correlation and linear regression assume a linear relationship, and the usual significance tests also assume roughly normally distributed residuals. If those assumptions don’t hold, a Pearson r or a linear slope can be misleading. Spearman rank correlation captures monotonic but non-linear relationships and is less sensitive to outliers. Always check scatter plots and residuals before reporting statistical results.
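To make the Pearson versus Spearman distinction concrete, the sketch below computes both coefficients by hand on a hypothetical dataset that rises steadily but not in a straight line. Spearman is implemented here as Pearson applied to ranks, with tied values given their average rank. The data and function names are illustrative only.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson product-moment correlation coefficient."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    syy = sum((y - y_bar) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)

def ranks(values):
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values equal to the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg_rank
        i = j + 1
    return result

def spearman(xs, ys):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(xs), ranks(ys))

# Hypothetical data: y doubles at each step (monotonic, strongly curved).
xs = [1, 2, 3, 4, 5, 6]
ys = [2 ** x for x in xs]

r_pearson = pearson(xs, ys)
r_spearman = spearman(xs, ys)
print(f"Pearson r = {r_pearson:.3f}, Spearman rho = {r_spearman:.3f}")
```

Because every increase in x comes with an increase in y, the Spearman coefficient is 1, while the Pearson coefficient is lower because the points do not lie on a line.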

Transformations and robust options

Some common remedies:

- Transformations (log, square root, reciprocal): Useful when variance grows with the mean or when relationships are multiplicative rather than additive.

- Non-parametric tests: Use when assumptions are violated—Mann–Whitney, Kruskal–Wallis, or Spearman’s rho can be appropriate alternatives.

- Bootstrapping and permutation tests: These resampling methods estimate confidence without strict distributional assumptions; they’re particularly handy with small sample sizes.
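The resampling methods in the last bullet can be sketched with the standard library alone. The example below is a minimal illustration, not a definitive implementation: it estimates a percentile bootstrap confidence interval for the difference between two group means and a two-sided permutation p-value for the same difference. The two groups, the function names, and the resample counts are all assumptions chosen for illustration.

```python
import random
from statistics import mean

def bootstrap_ci_diff(a, b, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for mean(b) - mean(a)."""
    rng = random.Random(seed)
    diffs = []
    for _ in range(n_boot):
        # Resample each group with replacement, keeping its original size.
        ra = [rng.choice(a) for _ in a]
        rb = [rng.choice(b) for _ in b]
        diffs.append(mean(rb) - mean(ra))
    diffs.sort()
    lo = diffs[int((alpha / 2) * n_boot)]
    hi = diffs[int((1 - alpha / 2) * n_boot) - 1]
    return lo, hi

def permutation_p_value(a, b, n_perm=2000, seed=0):
    """Two-sided permutation p-value for the difference in means."""
    rng = random.Random(seed)
    observed = abs(mean(b) - mean(a))
    pooled = list(a) + list(b)
    count = 0
    for _ in range(n_perm):
        # Shuffle the group labels and recompute the difference.
        rng.shuffle(pooled)
        pa, pb = pooled[:len(a)], pooled[len(a):]
        if abs(mean(pb) - mean(pa)) >= observed:
            count += 1
    # Add one to numerator and denominator so the estimate is never zero.
    return (count + 1) / (n_perm + 1)

# Hypothetical measurements for two conditions.
group_a = [4.1, 3.8, 4.5, 4.0, 4.3]
group_b = [4.4, 4.0, 4.9, 4.2, 4.6]

ci_low, ci_high = bootstrap_ci_diff(group_a, group_b)
p_value = permutation_p_value(group_a, group_b)
print(f"95% bootstrap CI for the difference: [{ci_low:.2f}, {ci_high:.2f}]")
print(f"Permutation p-value: {p_value:.3f}")
```

If the interval includes zero, the data are compatible with no difference between the groups, which is worth stating plainly in the write-up. With only a handful of replicates per group, treat both numbers as rough guides.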

Statistical choices at a glance

| Data/relationship | Recommended approach | What it reveals | Notes |
|---|---|---|---|
| Continuous variables, linear look | Pearson correlation, linear regression | Strength and slope of linear relationship | Check residuals and normality |
| Continuous but non-linear or ordinal | Spearman correlation, non-linear fit | Monotonic relationships or curve shape | Less sensitive to outliers |
| Small n, unknown distributions | Bootstrap CIs, permutation tests | Distribution-free confidence and p-values | Computationally simple with modern tools |

When the messy result is real: how to report honest uncertainty

It’s tempting to smooth away doubt, but IB assessors value transparency and critical thinking. A result that shows no clear trend can be as valuable as a clear one—if you treat it analytically.

- Report raw and processed data: Include your raw data tables in an appendix or the methods section, and show processed data in figures.

- Present alternative analyses: If Pearson and Spearman lead to different conclusions, show both and explain why one is more appropriate.

- Quantify uncertainty: Use error bars, standard deviations, and confidence intervals. If a correlation coefficient is low and the CI includes zero, say so plainly.

- Discuss plausible sources of variability: Technique, environmental noise, sampling bias, or a small effect size all deserve consideration.

Language that works in IAs, EEs, and TOK

Polished wording helps you be precise without overstating. Adapt these phrases to your context:

- “The data do not indicate a clear monotonic trend between X and Y under the conditions tested.”

- “No statistically significant relationship was observed (see confidence intervals), suggesting that variability may mask any small effect.”

- “Possible sources of variability include …; further investigation with increased replication or improved precision is recommended.”

- “These results illustrate a limitation of the experimental design: the chosen range/scale/precision may not capture the phenomenon of interest.”

Rethinking the design or research question

Sometimes the right choice is not more analysis but a redesign. That can be a small pivot rather than a complete restart.

- Change the scale or range: If the independent variable was too broad or too coarse, narrowing intervals can reveal a trend.

- Increase resolution: Use more measurement points, smaller increments, or more replicates to reduce sampling noise.

- Control confounders: Add controls, randomize order, or stabilize environmental variables.

- Shift focus: If a continuous trend is absent, consider reframing the question into a comparative or threshold-based investigation.
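When weighing whether more replicates are worth the lab time, a rough calculation helps. The sketch below uses the standard relationship that the standard error of a mean equals the sample standard deviation divided by the square root of the number of replicates. The numbers are hypothetical, and the calculation assumes the spread stays about the same as you add trials.

```python
from math import ceil, sqrt

def standard_error(sd, n):
    """Standard error of the mean for n replicates with sample SD sd."""
    return sd / sqrt(n)

def replicates_needed(sd, target_se):
    """Smallest n for which sd / sqrt(n) is at or below target_se."""
    return ceil((sd / target_se) ** 2)

# Hypothetical pilot result: SD of 0.5 units from 4 replicates.
pilot_sd = 0.5
current_se = standard_error(pilot_sd, 4)
n_for_target = replicates_needed(pilot_sd, 0.2)
print(f"Current standard error: {current_se:.2f}")
print(f"Replicates needed for a standard error of 0.2: {n_for_target}")
```

Because the standard error shrinks with the square root of n, halving it takes four times as many replicates. That is a useful point to make when justifying a design pivot.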

Practical design pivots you can justify in an IA

Small, well-justified changes demonstrate thoughtful planning. For example, in a biology IA that looked for a linear enzyme-activity vs temperature relationship but found no trend, you could justify testing a narrower temperature range that brackets the expected optimum, explaining the biological reasoning and citing variability concerns.

Case studies: concrete examples and responses

Below are short, practical scenarios with steps you might take. These aren’t exhaustive but are realistic routes students follow when data are ambiguous.

Biology IA: enzyme activity vs temperature shows no clear peak

Possible issues include too-large temperature increments, enzyme denaturation, or timing inconsistencies. Steps: check timing protocol, confirm reagent concentrations, rerun at narrower temperature intervals near the suspected optimum, and include more replicates. In the write-up, present both the original and refined data and explain why the second approach was necessary.

Physics IA: pendulum period vs amplitude shows unexpected scatter

Several things can cause this: the small-amplitude approximation breaks down at larger swings, friction and air resistance affect the motion, and timing errors are common. Try timing a larger number of swings per measurement, use video analysis for better precision, and control the release amplitude. If scatter persists, include a discussion about the limits of the simple theoretical model used.
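To judge whether amplitude or timing is the larger problem, a quick estimate helps. The sketch below uses the standard series correction to the small-angle period, T ≈ T0(1 + θ²/16 + 11θ⁴/3072) with θ in radians, and the fact that timing N swings in one measurement divides the stopwatch uncertainty per period by N. The pendulum length, the timing uncertainty, and the value of g are assumed for illustration.

```python
from math import pi, radians, sqrt

G = 9.81  # assumed gravitational field strength in m/s^2

def small_angle_period(length_m):
    """Period from the small-angle model, T0 = 2*pi*sqrt(L/g)."""
    return 2 * pi * sqrt(length_m / G)

def corrected_period(length_m, amplitude_deg):
    """Period including the first two amplitude-correction terms."""
    theta = radians(amplitude_deg)
    factor = 1 + theta ** 2 / 16 + 11 * theta ** 4 / 3072
    return small_angle_period(length_m) * factor

def period_uncertainty(timing_uncertainty_s, n_swings):
    """Uncertainty in one period when n_swings are timed together."""
    return timing_uncertainty_s / n_swings

length = 0.50  # hypothetical pendulum length in metres
t0 = small_angle_period(length)
for amplitude in (5, 15, 30):
    ratio = corrected_period(length, amplitude) / t0
    print(f"{amplitude} degrees: period is {100 * (ratio - 1):.2f}% longer than T0")

# Hypothetical reaction-time uncertainty of 0.2 s on each timing.
for n in (1, 10, 20):
    print(f"{n} swings timed: +/- {period_uncertainty(0.2, n):.3f} s per period")
```

At 30 degrees the predicted period is about 1.7% longer than the small-angle value, while at 5 degrees the difference is roughly 0.05%. If the timing uncertainty per period is larger than that shift, timing error rather than amplitude is the more likely source of scatter.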

Chemistry IA: titration end-point varies between trials

Inconsistent technique, concentration errors, or an unsuitable indicator can all create scatter. Check burette calibration, prepare fresh standard solutions, and standardize technique. If the end-point method remains variable, consider switching to instrumental detection (if allowed) or reframing to compare methods.

EE survey/field data: no trend across demographic groups

Investigate sampling bias and whether the survey instrument measures what you intended. Check sample sizes across groups. If the survey instrument is unreliable, include validation steps or triangulate with another data source. Honest reporting of sampling limitations is critical.

How to present non-trend results to IB assessors

Assessors look for scientific thinking: clear method, accurate data presentation, critical evaluation, and understanding of uncertainty. A “null” or ambiguous result can score highly if handled well.

- Structure results clearly: raw data, processed data, and figures with concise captions.

- Interpret conservatively: link claims directly to data and acknowledge uncertainty.

- Show evaluation: list specific improvements, quantify how they would reduce uncertainty, and prioritize them.

- Reflect on personal engagement: explain decisions you made and what you learned about the investigative process.

Notes on academic integrity

Do not alter data to fit expectations. Never omit inconvenient data without transparent justification. If an outlier is removed, document why and show analyses with and without it. These practices demonstrate responsibility and critical thinking—qualities IB values highly.
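One transparent way to handle a suspect point is to report the analysis both ways. The sketch below is a minimal illustration with hypothetical data: it computes the Pearson correlation with every point included and again with one flagged point excluded, so the reader can see how much the conclusion depends on that single value. The flagged index stands in for a point you have a documented reason to question.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson product-moment correlation coefficient."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    syy = sum((y - y_bar) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)

def without_index(values, index):
    """Copy of values with the item at the given position left out."""
    return [v for i, v in enumerate(values) if i != index]

# Hypothetical data; the fifth reading is the one noted as suspect.
xs = [1, 2, 3, 4, 5, 6]
ys = [2.0, 2.9, 4.1, 5.0, 1.0, 7.1]
flagged = 4  # zero-based position of the suspect reading

r_all = pearson(xs, ys)
r_excluded = pearson(without_index(xs, flagged), without_index(ys, flagged))
print(f"All points:        r = {r_all:.2f}")
print(f"Flagged point out: r = {r_excluded:.2f}")
```

Reporting both values alongside the reason for flagging the point lets the assessor judge the exclusion for themselves.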

When and how to ask for help

Teachers and tutors can provide perspective, but approach them prepared: show your raw data, a figure, and the diagnostic checks you’ve already done. If you need targeted support on analysis or write-up, consider working with someone who can guide statistical choices or suggest design pivots. For example, Sparkl’s personalized tutoring can be useful for clarifying which statistical tests fit your data, creating tailored study plans, or strengthening your evaluation and write-up. A focused session that reviews raw data, methods, and assessment criteria often converts uncertainty into clear next steps.

Final checklist before you submit

- Have you shown raw data and the processed figures that led to your conclusions?

- Have you justified any exclusions, transformations, or statistical choices?

- Have you discussed sources of uncertainty and suggested realistic improvements?

- Does your language avoid overclaiming while clearly stating what the data support?

Closing thought

An IA or EE that honestly engages with messy data demonstrates a deeper scientific mindset than one that only reports tidy results. The ability to diagnose, adapt, and critically reflect—supported by clear visuals and appropriate statistics—is exactly what IB assessors reward. Treat a non-trend not as failure but as an opportunity to show careful reasoning, methodological awareness, and thoughtful evaluation.
