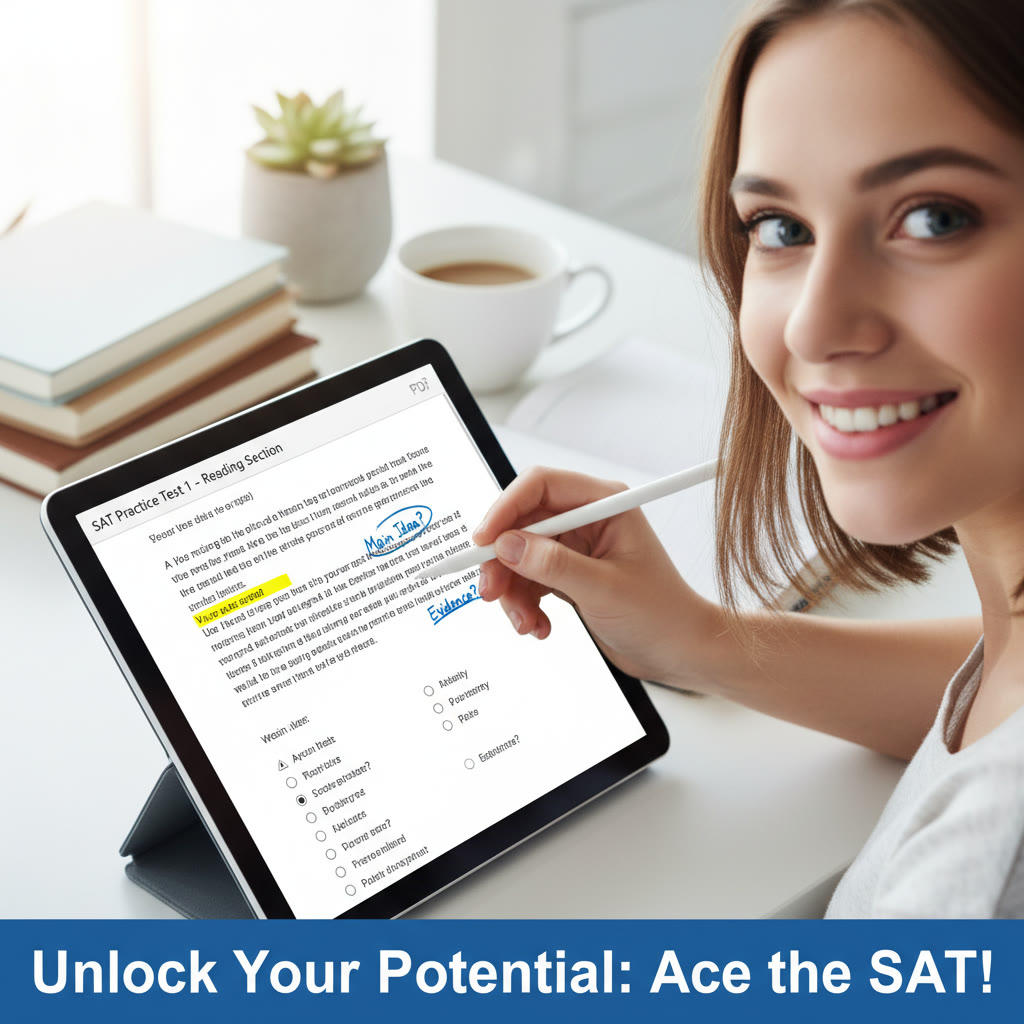

Why reviewing SAT practice tests digitally matters

You took a practice SAT. You checked your score. Now what? If your reaction is a mix of relief, frustration, and curiosity, you are in the right place. Reviewing practice tests is where real progress happens—not just taking more tests but learning from each one. Going digital makes that review faster, clearer, and far more actionable. It lets you spot patterns across dozens of questions and several test sessions, which is exactly the kind of insight that turns random effort into efficient gains.

From one-off mistakes to repeatable patterns

A single missed question is a story. A hundred missed questions across ten tests reveal a genre: careless arithmetic, recurring grammar traps, timing breakdowns, or misread question stems. A digital workflow helps you capture those stories, tag them, and analyze them across time. You can build up a record of what actually holds you back instead of guessing.

Set up a simple, reliable digital review workflow

Start with three principles: record, categorize, and act. Record every mistake or uncertain question. Categorize it by cause and content. Act by scheduling targeted practice. That triad is the backbone of a digital review system that scales with the number of tests you take.

Step 1: Capture the raw data

- Save each practice test in a single folder so you can return to the original PDF or screenshot. Organize by date and test number.

- Use a consistent file naming pattern: testdate_testname_section (for example: 2025-04-10_practice1_math.pdf). That consistency will pay off when you sort and filter.

- Record your overall score and section scores immediately. If you can, export or copy the official answer sheet data into a spreadsheet.
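The naming pattern above can be generated and parsed with a few lines of Python, which keeps names consistent when you save many files. This is a minimal sketch: the function names `build_test_filename` and `parse_test_filename` are hypothetical, and it assumes the test name and section contain no underscores.

```python
from datetime import date


def build_test_filename(test_date: date, test_name: str, section: str, ext: str = "pdf") -> str:
    """Build a name in the testdate_testname_section pattern."""
    return f"{test_date.isoformat()}_{test_name}_{section}.{ext}"


def parse_test_filename(filename: str) -> dict:
    """Split a file name back into its date, test name, and section."""
    stem, _, ext = filename.rpartition(".")
    date_part, test_name, section = stem.split("_", 2)
    return {
        "date": date.fromisoformat(date_part),
        "test": test_name,
        "section": section,
        "ext": ext,
    }


example = build_test_filename(date(2025, 4, 10), "practice1", "math")
print(example)  # 2025-04-10_practice1_math.pdf
print(parse_test_filename(example))
```

Because the date comes first in ISO format, sorting the folder by name also sorts it chronologically.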

Step 2: Log every question that needs attention

For each question you missed or guessed on, log these fields digitally: test ID, page and question number, question type, concept tested, your selected answer, correct answer, error type, time spent, and notes. A spreadsheet or a lightweight database works best for this, but a well-structured notes app or digital notebook works too.
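If you prefer to keep the log as a CSV file that any spreadsheet can open, the fields listed above map naturally onto one record per question. The sketch below is one possible layout, not a required one: the class name `LogEntry`, the column names, and the sample row are all illustrative assumptions.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class LogEntry:
    test_id: str
    question: str            # page and question number, e.g. "math-14"
    question_type: str
    concept: str
    selected_answer: str
    correct_answer: str
    error_type: str
    time_spent_sec: int
    notes: str = ""


def entries_to_csv(entries) -> str:
    """Serialize log entries to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(LogEntry)])
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buffer.getvalue()


# Hypothetical sample row for illustration only.
sample = [
    LogEntry("2025-04-10_practice1", "math-14", "multiple-choice",
             "linear equations", "B", "C", "careless", 95, "sign error"),
]
csv_text = entries_to_csv(sample)
print(csv_text)
```

Writing to a file instead of a string only requires passing an open file handle to `csv.DictWriter` in place of the `StringIO` buffer.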

Step 3: Tag smartly

Tags are how you turn rows of data into stories. Use a limited tag set—don’t go overboard. Examples:

- Concept tags: linear equations, functions, grammar-rules, command-of-evidence, reading-inference.

- Error tags: careless, incomplete-setup, wrong-strategy, timing, vocabulary.

- Skill level tags: conceptual, procedural, application.

Keep the tag list to 20 or fewer so patterns stand out rather than disappear in noise.
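One way to keep the tag list from creeping past that limit is to define it once and check new entries against it. The sketch below uses the example tags from this section; the helper name `unknown_tags` is a hypothetical choice.

```python
# Tag sets copied from the examples above.
CONCEPT_TAGS = {"linear equations", "functions", "grammar-rules",
                "command-of-evidence", "reading-inference"}
ERROR_TAGS = {"careless", "incomplete-setup", "wrong-strategy", "timing", "vocabulary"}
SKILL_TAGS = {"conceptual", "procedural", "application"}

ALLOWED_TAGS = CONCEPT_TAGS | ERROR_TAGS | SKILL_TAGS
MAX_TAGS = 20  # the cap suggested in the text

if len(ALLOWED_TAGS) > MAX_TAGS:
    raise ValueError(f"Tag list has {len(ALLOWED_TAGS)} tags; trim it to {MAX_TAGS} or fewer")


def unknown_tags(tags):
    """Return any tags that are not in the approved list, sorted for readability."""
    return sorted(set(tags) - ALLOWED_TAGS)


print(unknown_tags(["careless", "sloppy", "timing"]))  # ['sloppy']
```

Flagging an unknown tag, rather than silently accepting it, forces a decision: either it replaces an existing tag or it is a duplicate of one you already have.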

Tools and formats that make digital review fast

There is no single perfect tool. What matters is that your tools let you search, filter, visualize, and export. Commonly used combinations include a PDF reader for annotations, a spreadsheet for tracking, and a notes app for reflections. If you’re using a personal tutor or a service, sharing your digital log makes one-on-one sessions more productive.

Practical toolset

- A PDF annotator: highlight mistakes, add short notes, and timestamp sections you want to revisit.

- A spreadsheet: record question-level data. Use columns for date, test, section, question number, correct/incorrect, tag(s), time spent, and follow-up action.

- Visualization quickies: small charts or conditional formatting in the spreadsheet reveal trends at a glance.

What to log: fields that reveal trends

Not all data is equally helpful. The right set of fields helps you answer three questions: What am I missing? Why am I missing it? How fast am I improving?

Essential columns

- Test date and version: chronological analysis is essential for trend detection.

- Section and question number: isolates where the issue lives (Reading, Writing, or Math).

- Topic or standard: algebra, geometry, conditional reasoning, sentence structure, etc.

- Error type: careless, concept, misread, time-pressure, strategy, or vocabulary.

- Time spent: how long the question took and whether you ran out of time on the section.

- Resolution action: the planned remediation, such as reworking the problem or reviewing a concept video.

Example dataset: a simple table to track five practice tests

Below is an example of a compact table you can keep in a dashboard. It balances readability and depth so you can spot a downward or upward trend at a glance.

| Test | Date | Total Score | ERW | Math | Percent Wrong: Algebra | Percent Wrong: Reading | Average Time/Question (min:sec) |
|---|---|---|---|---|---|---|---|
| Practice 1 | 2025-01-10 | 1220 | 600 | 620 | 28% | 22% | 1:30 |
| Practice 2 | 2025-02-05 | 1250 | 620 | 630 | 24% | 20% | 1:25 |
| Practice 3 | 2025-03-01 | 1280 | 630 | 650 | 20% | 18% | 1:20 |
| Practice 4 | 2025-04-12 | 1290 | 630 | 660 | 18% | 17% | 1:18 |
| Practice 5 | 2025-05-20 | 1330 | 660 | 670 | 12% | 15% | 1:15 |

The table shows both Math and ERW scores rising, with algebra errors falling fastest (from 28% to 12%, compared with 22% to 15% for reading). That high-level signal tells you where remediation is working and where to keep investing effort.
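The first-to-last changes in the example table can be checked with a short script. This is only a sketch: the rows are copied from the table, while the tuple layout and the dictionary keys are illustrative assumptions.

```python
# Rows from the example table:
# (test, total score, ERW, Math, % wrong algebra, % wrong reading)
tests = [
    ("Practice 1", 1220, 600, 620, 28, 22),
    ("Practice 2", 1250, 620, 630, 24, 20),
    ("Practice 3", 1280, 630, 650, 20, 18),
    ("Practice 4", 1290, 630, 660, 18, 17),
    ("Practice 5", 1330, 660, 670, 12, 15),
]

first, last = tests[0], tests[-1]

# Change from the first test to the most recent one.
changes = {
    "total": last[1] - first[1],
    "erw": last[2] - first[2],
    "math": last[3] - first[3],
    "algebra_pct_wrong": last[4] - first[4],
    "reading_pct_wrong": last[5] - first[5],
}

for name, delta in changes.items():
    print(f"{name}: {delta:+d}")
```

With these numbers the total rises by 110 points, while the algebra error rate falls by 16 percentage points and the reading error rate by 7.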

How to interpret the trends you find

Turning numbers into action requires interpretation. I’ll walk through the most common patterns and what they typically mean.

Pattern: Slow, steady score improvement

What it means: Your study routine is working. Trends like a rising moving average across tests usually indicate that small, consistent fixes—targeted content review and timed practice—are adding up.

Action: Continue the approach, but look for lagging subtopics. If overall Math is up but algebra stubbornly lags, shift some study time to algebra drills.

Pattern: Up-and-down scores with stable weaknesses

What it means: You may be inconsistent in approach or face timing issues that vary test to test. Stable weaknesses suggest conceptual gaps that surface regardless of test conditions.

Action: Use your logs to isolate error types. For conceptual gaps, return to fundamentals and use targeted problem sets until error rates drop consistently.

Pattern: Many careless errors early, fewer later

What it means: Your attention and checking strategies are improving, often as a result of simple habits like marking eliminated answers or re-evaluating arithmetic steps.

Action: Keep a small, repeatable checking routine and make it a habit. Consider adding a ‘final 5 minutes’ checkpoint at the end of each section.

Deep dives by section: what to look for

Math

In Math, track mistakes by topic (algebra, functions, geometry) and by error type (misread, conceptual, arithmetic, or strategy). Timing matters—are you losing points because you rush or because you can’t decode the structure of the problem?

- If conceptual errors dominate, build a focused mini-unit: 20 problems on that concept until your error rate drops by half.

- If timing is the issue, practice with a tight timer on small clusters of questions (sets of 5–8) to build pacing without overwhelming yourself.

Reading

For Reading, log question types: main idea, inference, evidence, or vocabulary-in-context. Note whether you miss questions because you skimmed too quickly or because the passage required multi-paragraph integration.

- Missed inference questions: practice paraphrasing paragraphs and mapping how details support claims.

- Missed evidence questions: train the habit of linking claims to exact lines and annotating passages with a quick margin code (C for claim, E for evidence, A for author’s tone).

Writing and Language

In W&L, errors cluster around grammar rules, usage, or expression of ideas. Tag by grammar concept (subject-verb agreement, modifier placement, parallelism) and by whether the correct choice is stylistic or grammatical. Many students miss the subtle preference choices; the right remediation is both rule review and comparison drills.

Using visualizations to make trends jump out

Raw numbers are useful, but a small chart is the fastest way to notice a trend. Use simple line charts for score progression and bar charts for error categories. Conditional formatting in a spreadsheet is a low-effort way to highlight hot spots: red for repeated errors above a threshold, yellow for moderate, green for improving areas.

Two quick visual tricks

- Rolling average: a three-test moving average smooths noise and shows true direction.

- Heatmap by topic: a matrix where rows are tests and columns are topics, colored by percent wrong. This quickly shows whether a topic is persistently weak.
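Both tricks are easy to prototype before you build them in a spreadsheet. The sketch below computes a three-test moving average on the total scores from the example table and labels each test-by-topic cell with a color. The 25% and 15% cutoffs are assumptions chosen for illustration, not recommended values.

```python
def moving_average(values, window=3):
    """Return the mean of each consecutive window-sized slice."""
    return [
        sum(values[i:i + window]) / window
        for i in range(len(values) - window + 1)
    ]


total_scores = [1220, 1250, 1280, 1290, 1330]  # from the example table
smoothed = moving_average(total_scores)
print([round(s, 1) for s in smoothed])  # [1250.0, 1273.3, 1300.0]

# Percent wrong by test (rows) and topic (columns), from the example table.
percent_wrong = {
    "Practice 1": {"Algebra": 28, "Reading": 22},
    "Practice 2": {"Algebra": 24, "Reading": 20},
    "Practice 3": {"Algebra": 20, "Reading": 18},
    "Practice 4": {"Algebra": 18, "Reading": 17},
    "Practice 5": {"Algebra": 12, "Reading": 15},
}


def bucket(pct, red_at=25, yellow_at=15):
    """Map a percent-wrong value to a color label (cutoffs are assumed)."""
    if pct >= red_at:
        return "red"
    if pct >= yellow_at:
        return "yellow"
    return "green"


heatmap = {
    test: {topic: bucket(pct) for topic, pct in row.items()}
    for test, row in percent_wrong.items()
}

for test, row in heatmap.items():
    print(test, row)
```

A topic that stays red or yellow down an entire column is persistently weak; one that shifts toward green is responding to practice.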

From trend to study plan: prioritize ruthlessly

Once you know your error landscape, prioritize work that closes the biggest gaps fastest. That often means tackling the few topics that produce the most wrong answers. Use an 80/20 lens: a small share of topics, often around 20%, tends to account for most of your errors. Check your own log to see how concentrated your errors are, then reallocate study time accordingly.
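To see how concentrated your own errors are, rank topics by error count and find the smallest group that covers a chosen share of the total. The counts below are made-up illustration data, and `top_topics` is a hypothetical helper name.

```python
def top_topics(error_counts, share=0.8):
    """Return the highest-count topics that together reach `share` of all errors."""
    total = sum(error_counts.values())
    ranked = sorted(error_counts.items(), key=lambda kv: kv[1], reverse=True)
    chosen, running = [], 0
    for topic, count in ranked:
        if running >= share * total:
            break
        chosen.append(topic)
        running += count
    return chosen


# Hypothetical error counts pulled from a log (33 errors in total).
error_counts = {
    "quadratic functions": 14,
    "linear equations": 9,
    "punctuation": 4,
    "inference": 3,
    "geometry": 2,
    "vocabulary": 1,
}

priority = top_topics(error_counts)
print(priority)  # ['quadratic functions', 'linear equations', 'punctuation']
```

In this made-up log, three of six topics account for 27 of 33 errors, so those three would get most of the next study block.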

A sample prioritized action plan

- Week 1: Concept reinforcement for high-error topic (e.g., quadratic functions). Daily 30-minute focused practice on just that topic.

- Week 2: Mixed practice sets including the repaired topic and timed sections to rebuild stamina.

- Week 3: Full practice test to measure progress and record new data.

- Ongoing: Weekly review of your digital log to adjust focus.

How to structure review sessions so they scale

Set aside three kinds of review: quick daily checks, focused weekly deep dives, and full exam retrospectives after every full practice test.

Daily (15–30 minutes)

- Rework 5–10 questions you logged in the previous week.

- Practice micro-skills: a set of grammar edits or 10 algebra problems.

Weekly (1–2 hours)

- Analyze your spreadsheet for the week: which tags popped up most?

- Create a 3-step plan for the coming week centered on that tag.
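The weekly question of which tags came up most can be answered by counting tags over a seven-day window. This sketch assumes each log row is a (date, tags) pair. The sample rows are invented, and `top_tags` is a hypothetical helper name.

```python
from collections import Counter
from datetime import date, timedelta


def top_tags(log, week_end, n=3):
    """Count tags logged in the 7 days ending on week_end and return the top n."""
    week_start = week_end - timedelta(days=6)
    counts = Counter(
        tag
        for day, tags in log
        if week_start <= day <= week_end
        for tag in tags
    )
    return counts.most_common(n)


# Hypothetical log rows: (date logged, tags on that question).
log = [
    (date(2025, 5, 3), ["vocabulary"]),           # outside the week below
    (date(2025, 5, 12), ["timing", "functions"]),
    (date(2025, 5, 13), ["timing", "careless"]),
    (date(2025, 5, 15), ["timing", "functions"]),
    (date(2025, 5, 16), ["timing"]),
]

print(top_tags(log, week_end=date(2025, 5, 18), n=2))
# [('timing', 4), ('functions', 2)]
```

The top tag from this count is a natural center for the coming week's 3-step plan.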

Post-test retrospective (45–90 minutes)

- Enter every missed question into your log with tags and notes.

- Update charts and the moving average and draft a focused 2-week study plan.

How tutoring and personalized guidance can amplify digital insights

Digital review makes your data talk. A tutor translates that talk into targeted learning strategies. For example, if your digital log shows recurring timing issues in math but conceptual mastery when untimed, a tutor can help redesign your test-taking strategy and practice structure. Sparkl’s personalized tutoring fits naturally into this workflow: one-on-one guidance can interpret trends, craft tailored study plans, and use AI-driven insights to prioritize the highest-impact work.

What personalized tutoring adds

- Expert diagnosis: tutors read your data and help separate noise from meaningful patterns.

- Tailored study plans: instead of one-size-fits-all, you get an evolving plan based on your logs.

- Accountability and calibration: regular check-ins that adjust pacing, technique, and strategy based on recent tests.

- Smarter practice: tutors can provide targeted practice that addresses the exact concepts you’re missing, accelerating the improvement you see in your digital trend charts.

Common pitfalls and how to avoid them

Even with a great system, students can fall into traps. Here are the big ones and how to dodge them.

Pitfall: Recording everything but doing nothing with it

Logging without action is busywork. Make sure every logged mistake has a follow-up: a mini-lesson, 10 practice problems, or a correction note you re-test later.

Pitfall: Too many tags and too little focus

Tag paralysis is real. Keep a manageable set of tags so your patterns become visible rather than lost in detail.

Pitfall: Overfitting to a single test

One anomalous test—maybe you were sick, distracted, or rushed—shouldn’t drive a complete change in strategy. Use moving averages and multiple tests before making major shifts.

Real-world example: Sarah’s six-week digital turnaround

Sarah started with a 1150 diagnostic. Her digital log showed three repeated signals: algebra errors, careless arithmetic, and running out of time on the second math block. Working with a tutor and a simple spreadsheet, she logged every error and scheduled micro-lessons. The tutor used her data to create a weekly plan: targeted algebra drills, timed practice sets focusing on pacing, and a daily five-minute arithmetic check to reduce careless mistakes. In six weeks her practice-test average jumped to 1310, with algebra errors cut by more than half. The digital log made it obvious where to concentrate effort, and one-on-one guidance helped her close gaps faster.

Practical checklist: your first digital review session

- Save the original test file in a clearly named folder.

- Enter the test date and section scores into your spreadsheet.

- Log every wrong or guessed question with topic, error type, and time spent.

- Tag each entry with one remediation action (video, problem set, tutor session).

- Update your dashboard chart so you can see overall direction.

- If you have a tutor, share the log before your next session so the meeting starts from insight, not from recounting mistakes.

Final thoughts: make digital review a habit, not a chore

Looking at data can feel clinical, but this is the warmest form of efficiency: you get more of what matters out of the time you already put in. Build a habit: 10 minutes after every practice test to log, 30 minutes weekly to analyze, and a monthly snapshot to redirect study time. Over a few months those small, regular steps compound into real score gains.

Digital review gives you a map. Your study plan, practice, and occasional help from experts—like a tutor who knows how to read and act on your data—are the travel companions that get you there faster. With a thoughtful digital workflow you stop guessing and start improving. Take your next practice test, open your spreadsheet, and see what the data asks you to do next.
